10/25 GbE Cable De-mystification

- Fred

- Jul 18, 2023


Way back in the mists of time, when I was a green Novell CNE, I started working on Networking cards in 286 and 386 Novell servers when Netware was all the Open Systems computing rage.

The most common cable back then was 10 Mb Ethernet via 3Com 3C509-TPO NICs.

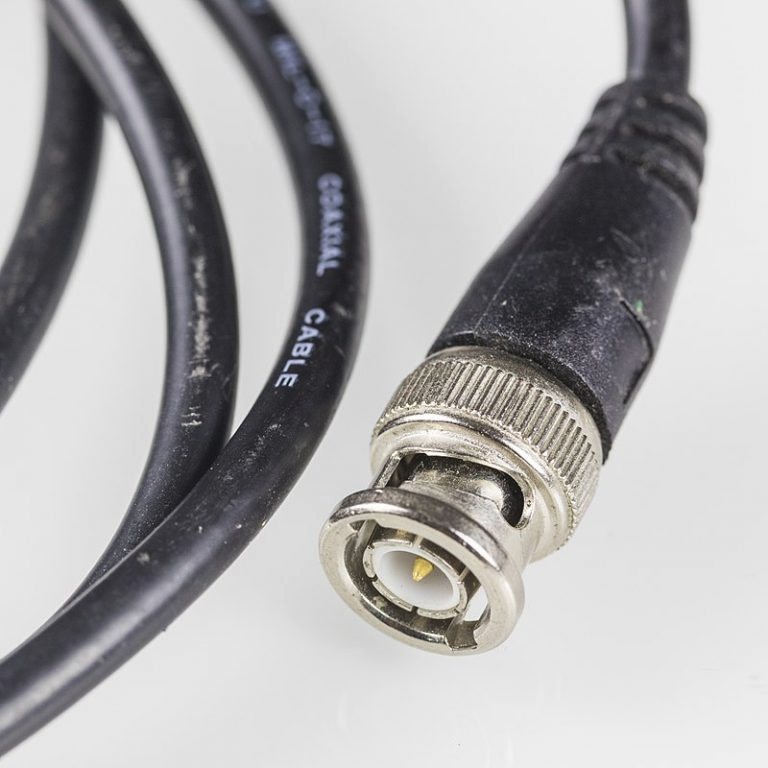

However, even before these 3Com NICs made Ethernet common with a softer, easier-to-plug-in RJ-45 cable, we used to connect Novell servers via RG-58A/U Co-Ax cable that was 50 Ohm fare with BNC-T and BNC Barrel connectors.

This cable was called "Thin" Ethernet and had a BNC Terminator on one of the two jacks of a client side BNC T-Connector if no other cable was being connected for another computing client (another downstream Desktop, usually).

These cables all had a tap off of one main cable and these things were a big pain in the ass if you had a lot of clients you needed to feed on one main cable feed.

Nedbank in South Africa was where I cut my Netware teeth and my office conversions for them in their Sandton and Johannesburg office buildings were on a per floor basis in them early days.

These servers were not housed in a Computer room back then either; that was reserved for their IBM mainframes and the smaller AS400 series systems as well as the odd duck Honeywell and Burroughs Poll select Mainframes that Nedcor, Perm and Nedfin used to run their various ATM networks on.

The Netware server in them days went next to the printer all the desktops were printing to from Lotus Smartsuite using Ami Pro word processors or Lotus 123 Spreadsheet software.

I was never a Microsoft Office fan until IBM had screwed the whole Lotus Smartsuite software package up real good and by then we had no choice.

I used to connect the cable segments for access to the Netware servers with barrel connectors and these were where signal losses occurred if these connectors came with defects or were twisted loose somehow - or even trampled on deliberately.

I remember doing a lot of measuring back then of 50 and 20 foot Thin Ethernet Co-Ax cable segments that came from a T-Connector tap near to the target office cubicle back when I installed Novell Netware servers for file and print station purposes for Windows for Workgroups 3.11 clients running MS-DOS 5 or DR-DOS 6 (if I had my way it was DR-DOS 6 every time).

Microsoft DOS sucked Ass big time compared with the Digital Research variant of DOS by the way and I used to tell all my pals who worked for Microsoft that MS-DOS was DROSS.

I would then collapse in heaps of hand slapping side splitting laughter nobody else could fathom at this quip escaping my lips.

Tears would stream down my cheeks it was so bad!

Back then I was also a SCO UNIX guru and had me a grand old time integrating both Windows for Workgroups 3.11 and SCO Unix desktops into Netware setups as server clients.

I was so good at this lark that Novell even bought Bell Labs UNIX from AT&T and called it UnixWare, which had a pretty X-Windows GUI.

The guy Novell sent from Provo to gawk at my SCO ACE Netware client I wrote for Denel Aviation myself had them all excited for some reason I could never fathom.

Back then I worked for a company called Technetics who wrote a lot of code for Novell and we even manufactured our own Netware server we called a Netlan 5000 which we sold to South African Telkom who I had previously worked for in their Research lab as an electronic design engineer before I joined Technetics.

Telkom had 10,000 Novell Netware 3.11 servers by the time we realized a hardware router with routing protocols was gonna be needed.

You have no idea what the routing table on /etc/hosts looked like on each host when one of them went down, which was often on that RG-58A/U Thin Ethernet cable back then.

This move of mine from electronics into computing happened because the P.OT.E.L.iN lab I worked for in Pretoria sent me to the USA to investigate using Netware 2.11 for our P-CAD operations setup we were using to design our electronics goodies with.

We needed to share design objects each CAD engineer was working on to save time on projects.

Soon I was hooked on these new open systems compute devices and left electronics far behind me in a deep road runner wake yelling "neep-neep" as I went.

In any event, in my Netware adventures I dabbled with Arcnet, Thick Ethernet and all manner of Network cards and I even worked for the Token Ring specialists Madge Networks at some point and led them to purchasing an Israeli Ethernet company called Lannet back in 1995.

I saw Token Ring was not the way to big bucks even back then.

The point of all this background is to relay that I have dabbled with all x16, x32 and x64 server network cable connectivity options from day one, including Mainframe systems and even IBM AS400 stuff.

I wrote a lot of software that encapsulated SNA and TCP/IP over IPX and SPX and then had me some Multi-Protocol IS-IS routing adventures in a product we wrote for Novell called MPR 3.0 (Multi-Protocol-Routing) even before Cisco was thought of as a networking company.

I even tried to convince 3Com that Routing was where the big bucks was gonna be but they failed to heed my advice and they stupidly ceded that opportunity to Cisco and Wellfleet.

I did dev work on the 3Com 6000 series routers at Standard Chartered Bank before they chucked in the towel on that shit and that was pretty disappointing for them and for me in light of what Cisco did with it all.

Seems funny now that little factoid....

So in any event, I came to take Ethernet connectivity at 1/10/25 GbE levels as a given over the years after I switched from Ethernet networking to Storage systems using Fibre Channel. I kinda did not soak up the nuanced differences of high speed Ethernet at these speeds, other than preferring Optical LC Cables matched with Optical Transceivers for the iSCSI storage gigs I was working on at Hitachi, EMC and NetApp.

In my mind nothing beats LC optical cables in terms of troubleshooting and reliability, but I noticed a lot of Server Appliance systems were fond of using 1, 3, 5, 7 or 10M DAC Twinax copper cables, as these come with transceivers permanently fixed to the cable that you just plug in to the NIC and switch ports.

In time I grew to love AOC cables with the SFP's built into them, even more than LC stuff.

Some of these Copper DAC Twinax cables can be very noisy if not made properly or a grounding fault occurs with them - so for that reason I am not a fan of them thangs.

I tend to advise customers to use AOC cables, or 10 GbE or 25 GbE LC OM3 or OM4 optical cables of 2M or 3M lengths with Optical 10GbE SFP+ Transceivers or SFP28 Optical Transceivers at either end of the cable.

With LC you can actually get away with using an OM3 or an OM4 cable if it is a good one, just the distances for OM3 are much shorter.

Nutanix is one of those manufacturers of Server appliances that only come with Copper Twinax DAC cables of 1, 3, 5 or 7 Meter lengths or AOC SFP28 cables for 25 GbE use.

If you want Optical Ethernet LC-UPC cables with their gear you need to source your own LC cables and the SFP+ or SFP28 Optical Transceivers. In fact I advise you not to order any HCI gear with the HCI manufacturer's cables due to switch challenges.

Get your switch-approved cable and SFP combos - the easy button is stuff from Finisar as it works with most switches and NICs.

Fortunately, an LC Cable for 10 GbE and 25 GbE can be an OM3 or OM4 variant, but generally speaking 10 GbE cables are OM3 LC-PC cables and cables for 25/40/100GbE are OM4 LC-UPC type cables.

The OM4 cable can usually also do 10 GbE with 10GbE SFP+ Optical transceivers per the spec sheets from Cisco and Finisar.

The OM3 LC-PC cable works with a 10GbE SFP+ or an SFP28 optical Transceiver by the way, just the transmission distances vary - at 25G, OM3 can go to 70M while OM4 can do 100M cable lengths, and at 10G both reach a good deal further.

For 25GbE, if you just want a quick fix with one SKU, go with a SFP28 AOC cable. This is the most popular solution out there, as these AOC cables come with SFP28 transceivers fixed at each end of the cable.

Actually an OM4 LC-UPC to LC-UPC cable will connect 10/25/40/100 GbE with many different types of Optical Transceiver (SFP+, SFP28, GBIC100 etc.) and is also compatible with QSFP+ but QSFP+ has a wider and different connector.

Obviously for 40/100G speeds you will need a suitable 40/100G capable Transceiver pair.

Technically both OM3 and OM4 Cables will support 10/25 GbE, but for 25GbE the easy route is a SFP28 Active Optical Cable (AOC), which comes as one part with a suitable SFP28 transceiver on each end. Unless you are a Sado-Masochist, that is, in which case you can dabble with both OM3 LC and OM4 LC Cables but must match the transceiver to the speed you want (10G is SFP+ and 25G is SFP28).
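The matching rules above can be written down as a small lookup. This is only a rough sketch: the `LinkPlan` and `check_link` names are made up for illustration, and applying the 70M and 100M reach figures quoted above to both speeds is a conservative assumption of the sketch, so always check the transceiver spec sheet for the real limits.

```python
from dataclasses import dataclass
from typing import Tuple

# Transceiver form factor per link speed, as stated in this post.
TRANSCEIVER_FOR_SPEED_GBE = {10: "SFP+", 25: "SFP28"}

# Reach figures quoted in this post, in metres. Used here for both speeds
# as a conservative rule of thumb (an assumption of this sketch).
MAX_REACH_M = {"OM3": 70, "OM4": 100}


@dataclass(frozen=True)
class LinkPlan:
    """Hypothetical description of one planned fiber link."""
    speed_gbe: int   # 10 or 25
    fiber: str       # "OM3" or "OM4"
    length_m: float  # planned cable run in metres


def check_link(plan: LinkPlan) -> Tuple[str, bool]:
    """Return (transceiver type to use, whether the run fits the quoted reach)."""
    if plan.speed_gbe not in TRANSCEIVER_FOR_SPEED_GBE:
        raise ValueError(f"unsupported speed: {plan.speed_gbe} GbE")
    if plan.fiber not in MAX_REACH_M:
        raise ValueError(f"unknown fiber grade: {plan.fiber}")
    transceiver = TRANSCEIVER_FOR_SPEED_GBE[plan.speed_gbe]
    within_reach = plan.length_m <= MAX_REACH_M[plan.fiber]
    return transceiver, within_reach
```

For example, `check_link(LinkPlan(25, "OM3", 80))` flags the run as too long for OM3, while the same 80M run on OM4 passes.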

If you insist on custom Fiber LC for 25 GbE it can be done by the way.

You will need the Finisar SFP28 Transceiver P/N SFP28-25GSR-85 and the OM4 LC cable P/N OM4LCDX that comes in lengths of 0.2M, 0.5M, 1M, 1.5M, 2M, 3M, 5M, 7M, 10M, 15M, 20M, 30M and 50M.

This will work in Intel 710/810 and Nvidia Mellanox CX6 NICs as well as ALL switch manufacturers' gear (Cisco, Arista, Juniper, Aruba, Mellanox, Netgear, Dell, H3C etc.).

This is my preferred, works-with-most-things networking option if there are distances in the data center that exceed 90M.

The Finisar SFP28 transceiver-with-cable packages start from $39, and the cables for your LC builds start from $5.40 depending on length.

Just get it all from Finisar if you go this route and be done with it.
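If you are sizing LC runs yourself, picking the shortest stocked cable that still covers the run saves you from coiling up spare fiber. Here is a minimal sketch using the stocked lengths listed above; the `pick_cable_length` name and the half-metre default slack are my own assumptions, not anything from a vendor.

```python
from bisect import bisect_left

# Stocked OM4 LC cable lengths in metres, as listed in this post.
STOCK_LENGTHS_M = [0.2, 0.5, 1, 1.5, 2, 3, 5, 7, 10, 15, 20, 30, 50]


def pick_cable_length(run_m: float, slack_m: float = 0.5) -> float:
    """Return the shortest stocked length covering the run plus some slack.

    slack_m is an assumed allowance for routing and bend radius.
    """
    needed = run_m + slack_m
    idx = bisect_left(STOCK_LENGTHS_M, needed)  # first length >= needed
    if idx == len(STOCK_LENGTHS_M):
        raise ValueError(f"no stocked cable covers {needed} m")
    return STOCK_LENGTHS_M[idx]
```

A 2.2M run with the default slack lands on the 3M cable, and anything needing more than 50M raises an error so you know to look elsewhere.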

When you look at networking problems on servers running 10/25 GbE, 99% of the time the culprit is the network cabling.

This is why I prefer Optical AOC or LC solutions because once they are running, moving them will not cause noise and signal loss if they are all inserted properly in the NIC side.

DAC Copper Twinax, on the other hand, is a network engineer's nightmare.

In fact in my experience ALL 10GBase-T Copper setups for HCI deployments fail to meet the stable operating standards I would expect to enjoy for a production system.

Sure, technically it works, but do not breathe or let rodents run anywhere near the cables. You will be shocked how many data centers have rodents.

I could write books of legends about what my rodent cams have seen in some big company Data Centers, and it would make your hair stand on end!

I cannot tell you how many times a customer suddenly has big network problems due to moving systems or pulling in new equipment next to your systems with all sorts of cabling. That Copper stuff, if it gets disturbed or nibbled on by rodents, comes with potentially disastrous results.
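When you suspect a disturbed cable, comparing the NIC error counters before and after the work is one quick way to check. The sketch below assumes a Linux host that exposes the standard counters under `/sys/class/net/<interface>/statistics`; the function names and the choice of counters are mine, and your hypervisor may expose these numbers somewhere else entirely.

```python
from pathlib import Path
from typing import Dict

# Counters that tend to climb when a cable or transceiver is marginal.
COUNTERS = ("rx_errors", "rx_crc_errors", "rx_dropped", "tx_errors")


def read_error_counters(iface: str, sysfs_root: str = "/sys/class/net") -> Dict[str, int]:
    """Read the error counters Linux exposes for one interface.

    Counters that the driver does not expose are simply skipped.
    """
    stats_dir = Path(sysfs_root) / iface / "statistics"
    counters = {}
    for name in COUNTERS:
        path = stats_dir / name
        if path.is_file():
            counters[name] = int(path.read_text().strip())
    return counters


def counters_that_grew(before: Dict[str, int], after: Dict[str, int]) -> Dict[str, int]:
    """Return only the counters that increased, with the size of the increase."""
    return {
        name: after[name] - before[name]
        for name in after
        if name in before and after[name] > before[name]
    }
```

Take one reading with `read_error_counters("eth0")` before anyone touches the rack (the interface name here is just a placeholder), take another afterwards, and feed both to `counters_that_grew`. A CRC count that keeps climbing usually points at the cable or transceiver.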

As a result of my last 25 years of Data Center experience, I can say give me AOC or LC optical every time! Copper really is for the sado-masochists or the birds!

Pre-sales guys love DAC Twinax cables for 10 GbE as it is easy and cheap, but it is not wise if stability of operations is a goal you want to reach.

I just experienced this at a customer in Sacramento. While they were pulling in the DAC cables for their new G8 gear, two servers on the legacy G6 gear died because the work disturbed the networking cables on both the servers in one 2U block.

Even though the customer was doing the cable moving work, we got the blame.

I guess just observing this work in person can be a crime too....

Below is a table of my recommended connection options for SFP+ AOC and SFP28 AOC.

Take serious note of the first cable in the below table......That is indeed a 10G AOC Cable with SFP+ transceivers built in (with the OM4 Optical goodies!!)

For Nutanix Nodes at 10G I recommend:

FS P/N SFPP-A003 at $27 (https://www.fs.com/products/30889.html) is the way to go.

They also have cables from 1M and 2M up to 30M long.

For Nutanix stuff running at 25G I recommend Nutanix SFP28 AOC cables with SFP28 transceivers or https://www.fs.com/products/68336.html. These also come in lengths from 1M up to 30M, and $53 for the 3M cable with transceivers is a sweet deal.

If you want to be virtually guaranteed this will work with any switch, get the Finisar cable with transceivers package.

These assume you are using Broadcom, Intel or Mellanox 10/25G NICs that you ordered with your Nutanix nodes when you bought them.

These Finisar AOC cables with the Transceivers work in many switches but not all of them. Remember, for HCI action you need a Data Center class switch, which means Cisco Catalyst and the FEX 2000 series are not supported and are asking for trouble.

No fabric extender class switches are supported with Hyper converged systems by the way.

I always recommend Nvidia Mellanox 2010/2100/2700 switches or the Aruba CX 6000 or CX 8000 series data center class switches for HCI traffic by the way. Cisco Nexus FX93180 comes in as a third class option.....You do need the latest NX-OS for HCI traffic on that beast by the way - the old NX-OS was found to have serious bugs in it.

You can also buy separate OM3 or OM4 cables to work with 10G SFP+ optical transceivers by the way - also better than DAC Twinax cables by a long shot, but the cheaper and easier easy button is the AOC Cables with 10G SFP+ or SFP28 Optical transceivers built in to them....

Many options to choose here...

Dickey cables are tricky to deal with and impact the user perception and uptime from a user perspective, so try not to go there unless it's a devops platform.
